If you want the short answer, start with Playwright MCP. It is the best default for most developers because it is local, well-documented, and predictable. Choose Browserbase for cloud scale, mcp-chrome for working inside an already logged-in browser, Browser Use for persistent agent sessions, and Chrome DevTools MCP for debugging and performance audits.

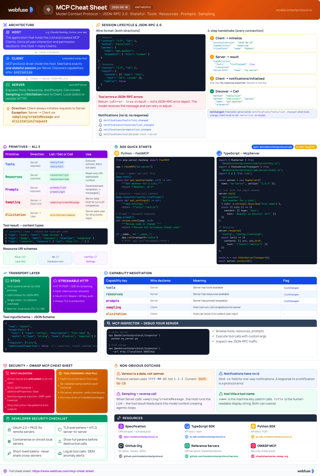

Model Context Protocol (MCP) is the standard that makes those connections possible. Introduced in late 2024, it gives AI hosts a consistent way to call external tools such as browsers, databases, and local files through a client-server model. If you want a faster protocol overview before comparing servers, start with our MCP Cheat Sheet.

Not every MCP server is built for the same job. Here is the fast breakdown before the deeper comparison:

- Best overall for most developers: Playwright MCP

- Best for cloud scale: Browserbase MCP

- Best for local logged-in browsing: mcp-chrome

- Best for persistent agent workflows: Browser Use MCP

- Best for debugging and performance audits: Chrome DevTools MCP

Quick Comparison

| MCP Server | Best For | Tradeoff |
|---|---|---|
| Playwright MCP | Most developers who want a reliable default for browser automation | Requires local setup and some manual configuration |
| Browserbase MCP | Teams that want cloud-hosted browser automation at scale | Adds API costs and depends on external services |
| mcp-chrome | Working inside your existing logged-in Chrome session | Needs a manual extension setup and only fits local use |
| Browser Use MCP | Persistent workflows that need saved sessions and long-running tasks | Has more moving parts than simpler local tools |
| Chrome DevTools MCP | Debugging, performance audits, and technical browser analysis | Less suited for general-purpose multi-step automation |

How We Chose These MCP Servers

We picked these five tools based on the criteria most teams actually care about when choosing an MCP server for browser automation: reliability, ease of setup, support for real browser workflows, debugging visibility, and how well each option fits a specific use case like local development, cloud scale, persistent sessions, or technical audits.

These five servers are among the stronger options we found in 2026 for real-world AI agent browser workflows.

We evaluated them based on:

- Setup friction: how much work it takes to install, configure, and connect the server

- Workflow fit: whether the tool is best for local use, cloud scale, logged-in browsing, persistence, or debugging

- Control model: whether it relies on structured browser actions, natural-language instructions, or browser-native debugging signals

- Operational tradeoffs: costs, external dependencies, browser limitations, and day-to-day usability

How MCP Works

MCP uses a client-server architecture where an AI host connects to servers that expose browser actions as callable tools. The host handles the model, the server handles the browser, and the two communicate over JSON-RPC 2.0. In practice, that lets an AI navigate pages, click buttons, fill forms, and extract data without custom glue code.
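To make the JSON-RPC 2.0 part concrete, here is a minimal sketch of the two requests a host typically sends. It first calls tools/list to discover a server's tools, then calls tools/call to invoke one. The method names and the name/arguments parameters follow the MCP specification. The browser_navigate tool and its url argument are only an illustration, and the make_request helper is hypothetical.

```python
import json
from itertools import count
from typing import Optional

# JSON-RPC 2.0 matches each response to its request through the "id" field,
# so every request gets a fresh one.
_ids = count(1)


def make_request(method: str, params: Optional[dict] = None) -> str:
    """Serialize a JSON-RPC 2.0 request the way an MCP host would send it."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# Step 1: discover which tools the server exposes.
list_tools = make_request("tools/list")

# Step 2: invoke one tool by name with JSON arguments (illustrative values).
navigate = make_request(
    "tools/call",
    {"name": "browser_navigate", "arguments": {"url": "https://example.com"}},
)

print(list_tools)
print(navigate)
```

The server's reply carries the same id plus either a result or an error object, which is how the host pairs answers with calls. A real session also begins with an initialize handshake, which is omitted here.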

Standardizing these interactions helps developers avoid writing custom code for every new integration. By 2026, major companies such as Microsoft and Google had publicly shown support for MCP through integrations and ecosystem work, helping agents connect to live tools and data sources.

- Standardized Integration: Uses JSON-RPC 2.0 to create a predictable way for models to talk to external data.

- Context Efficiency: Servers provide the AI with only the data it needs to complete a task.

- Agentic Workflows: Supports multi-step actions like filling forms or scraping data.

- Secure Access: Keeps credentials and sensitive data on the server side rather than in the model prompt.

Playwright MCP Server (Microsoft)

Microsoft built Playwright MCP as a practical bridge between AI hosts and modern browsers. Under the hood it uses Playwright for navigation and interaction, but the real advantage is how efficiently it packages page state for the model. The result is a setup that feels closer to reliable test automation than experimental agent tooling. If you are still deciding whether Playwright is the right automation foundation, see our comparison of Playwright vs Puppeteer for AI agent control.

Instead of sending raw HTML or screenshots for every step, the server relies on accessibility snapshots. That gives the model a structured, token-efficient view of the page while preserving enough detail for forms, buttons, and navigation. It also supports multiple browsers, including Chromium, Firefox, WebKit, and Microsoft Edge, and runs on Node.js 18 or higher.

Available Tools for Browser Interaction

The server exposes a focused set of tools for the core browser actions most agents need.

- browser_navigate: Visits a specific URL and waits for the page to load.

- browser_click: Clicks an element identified by its reference from the latest accessibility snapshot.

- browser_fill_form: Inputs text into form fields or text areas.

- browser_snapshot: Captures the current state of the page using the accessibility tree.

- browser_console_messages: Retrieves logs from the browser console to check for errors.

- browser_network_requests: Monitors data moving between the browser and the server.

- browser_verify_element_visible: Confirms if a specific button or text appears on the screen.

Simple Integration and Configuration

Setup is straightforward if you already have Node.js. Most users run it through npx and add a small MCP entry in their client config. In current releases, Playwright MCP can also expose an HTTP MCP endpoint directly, which makes local connection simpler for clients that prefer URL-based configuration.

```json
{
  "mcpServers": {
    "playwright": {
      "command": "npx",
      "args": ["-y", "@playwright/mcp@latest"]
    }
  }
}
```
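If your client config already lists other servers, add the Playwright entry without overwriting them. The sketch below assumes the client keeps its settings in a JSON file with a top-level mcpServers object like the one above. The add_server and update_config_file helpers are hypothetical, and the real file location depends on your MCP client.

```python
import json
from pathlib import Path


def add_server(config: dict, name: str, command: str, args: list[str]) -> dict:
    """Return a copy of config with one stdio server entry added under mcpServers."""
    updated = dict(config)
    servers = dict(updated.get("mcpServers", {}))  # keep any existing servers
    servers[name] = {"command": command, "args": list(args)}
    updated["mcpServers"] = servers
    return updated


def update_config_file(path: Path) -> None:
    """Hypothetical: pass whichever config file your MCP client actually reads."""
    config = json.loads(path.read_text()) if path.exists() else {}
    config = add_server(config, "playwright", "npx", ["-y", "@playwright/mcp@latest"])
    path.write_text(json.dumps(config, indent=2) + "\n")


existing = {"mcpServers": {"other": {"command": "uvx", "args": ["some-server"]}}}
merged = add_server(existing, "playwright", "npx", ["-y", "@playwright/mcp@latest"])
print(json.dumps(merged, indent=2))
```

Returning a new dictionary instead of editing the loaded one in place makes it easy to diff the old and new config before writing anything to disk.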

Docker is a good fallback if your local machine does not already have the browser dependencies Playwright needs. The official Microsoft image already bundles the browser and the system libraries it requires. Use docker run -i --rm mcr.microsoft.com/playwright/mcp to start it.

Practical Implementation Process

A typical flow is simple: navigate, take a snapshot, act, then verify with another snapshot.

For example, logging into a site usually means identifying the username and password fields from the snapshot, filling them, clicking the submit button, and checking the next snapshot to confirm the dashboard loaded.
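The navigate, snapshot, act, verify loop can be written down as the sequence of tools/call requests a host would send for that login. This is a minimal sketch with several assumptions. The ref values are hypothetical stand-ins for element references taken from the preceding browser_snapshot. The URL and credentials are placeholders. The argument shapes for browser_fill_form and browser_click should be checked against the schemas your server returns from tools/list.

```python
import json

# Hypothetical element references. In a real run these come from the
# accessibility snapshot returned by the previous browser_snapshot call.
USERNAME_REF = "e12"
PASSWORD_REF = "e14"
SUBMIT_REF = "e16"


def tool_call(request_id: int, name: str, arguments: dict) -> dict:
    """Build one MCP tools/call request (JSON-RPC 2.0)."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


def login_plan(url: str, username: str, password: str) -> list[dict]:
    """Navigate, snapshot, act, then snapshot again to verify the result."""
    steps = [
        ("browser_navigate", {"url": url}),
        ("browser_snapshot", {}),  # read the form and pick out element refs
        ("browser_fill_form", {"fields": [  # argument shape is an assumption
            {"name": "Username", "type": "textbox", "ref": USERNAME_REF, "value": username},
            {"name": "Password", "type": "textbox", "ref": PASSWORD_REF, "value": password},
        ]}),
        ("browser_click", {"element": "Sign in button", "ref": SUBMIT_REF}),
        ("browser_snapshot", {}),  # confirm the dashboard actually loaded
    ]
    return [tool_call(i, name, args) for i, (name, args) in enumerate(steps, start=1)]


for request in login_plan("https://example.com/login", "demo", "not-a-real-password"):
    print(json.dumps(request))
```

In a live session, the host reads each response before sending the next request. The refs for the form fields are only known once the first snapshot comes back.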

Testing and Debugging the Server

Most debugging comes down to selector failures, timeouts, or popups. Headed mode helps because you can see where the agent clicks and where it gets stuck.

The browser_console_messages tool provides information about JavaScript failures on the page. If a button does not work, the console logs might show a blocked script. Capturing a trace is another method for deep analysis. To record one, enable the DevTools capability and use the tracing tools exposed by the server, such as browser_start_tracing and browser_stop_tracing. You can then open the resulting trace in the Playwright Trace Viewer to see a timeline of the agent's work.

Technical Capabilities and Limits

One of Playwright MCP's biggest strengths is consistency. It handles structured content like tables and lists without forcing the model to guess its way through raw markup, which usually leads to cleaner extraction and fewer brittle steps.

The server has some limitations. The Docker version only supports headless Chromium, which might behave differently than a real browser. Advanced features like coordinate-based clicking require specific flags during startup. A useful part of the configuration is setting allowed or blocked origins to limit where the agent should navigate, though these filters should be treated as guardrails rather than a hard security boundary.
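To see why origin filters work as guardrails rather than a security boundary, here is a simplified, hypothetical check of the kind such a filter performs. It reduces a navigation URL to its scheme, host, and port and compares that against allow and block lists. It is not Playwright MCP's implementation. The check controls where the agent may go, but it cannot judge what the agent does once it reaches an allowed site.

```python
from urllib.parse import urlsplit

DEFAULT_PORTS = {"http": 80, "https": 443}


def origin_of(url: str) -> tuple[str, str, int]:
    """Normalize a URL to its (scheme, host, port) origin."""
    parts = urlsplit(url)
    scheme = parts.scheme.lower()
    host = (parts.hostname or "").lower()
    port = parts.port or DEFAULT_PORTS.get(scheme, 0)
    return scheme, host, port


def is_navigation_allowed(url: str, allowed: list[str], blocked: list[str]) -> bool:
    """Blocklist wins; otherwise the URL's origin must appear in the allowlist."""
    target = origin_of(url)
    if target in {origin_of(o) for o in blocked}:
        return False
    return target in {origin_of(o) for o in allowed}


allowed = ["https://app.example.com"]
blocked = ["https://admin.example.com"]
print(is_navigation_allowed("https://app.example.com/settings", allowed, blocked))
print(is_navigation_allowed("https://APP.example.com:443/", allowed, blocked))
print(is_navigation_allowed("http://app.example.com/", allowed, blocked))
```

This sketch denies by default: an empty allowlist blocks everything, and the blocklist always takes precedence. Normalizing the host case and default ports avoids trivial mismatches between otherwise identical origins.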

Comparison of Pros and Cons

The Playwright MCP server provides a stable foundation for web tasks. Its popularity comes from the strong support of the Playwright community and its ability to work with many browsers.

Pros of Playwright MCP:

- Efficiency: Accessibility snapshots use fewer tokens than HTML.

- Engine Support: Works with Chromium, Firefox, and WebKit.

- Debugging: Offers detailed traces and console logs.

- Deterministic: Actions target exact element references from the accessibility snapshot, so each step is precise and repeatable.

Cons of Playwright MCP:

- Setup: Requires Node.js or Docker knowledge for initial configuration.

- Docker Limits: Headless mode is the only option in standard containers.

- Manual Flags: Some features are off by default and need manual activation.

Best for: developers who want the safest default choice for local browser automation, testing, and repeatable workflows.


Browserbase MCP Server

Browserbase is the most infrastructure-friendly option in this list. Instead of asking you to run browsers locally, it gives you a managed cloud environment and layers Stagehand on top so agents can act on pages through higher-level instructions rather than selector-heavy scripts.

The browser sessions run remotely and are controlled through standard MCP tools, so your local machine is mostly out of the critical path. That makes Browserbase attractive for teams running many concurrent agents or building workflows that need hosted reliability.

Core Features for Remote Automation

This server exposes a compact set of Stagehand-powered tools for navigation, actions, observation, extraction, and session management. It combines traditional automation with AI-assisted page understanding.

- navigate: Moves the browser to a specific web address.

- act: Performs an action based on a text instruction like "click the sign-up button."

- observe: Returns a structured view of the current page for the agent.

- extract: Pulls structured data from a page without needing a predefined schema.

- start: Opens a new browser session.

- end: Ends the active browser session to save resources.

Technical Setup and API Integration

Using the Browserbase server requires an active account, API keys, and a small MCP config. Browserbase supports both hosted and local deployment paths, but the managed Browserbase environment is still central to its setup model.

```json
{
  "mcpServers": {
    "browserbase": {
      "command": "npx",
      "args": ["-y", "@browserbasehq/mcp-server-browserbase"],
      "env": {
        "BROWSERBASE_API_KEY": "YOUR_KEY",
        "BROWSERBASE_PROJECT_ID": "YOUR_PROJECT_ID",
        "GEMINI_API_KEY": "YOUR_AI_KEY"
      }
    }
  }
}
```

At the time of writing, Browserbase's documentation lists google/gemini-2.5-flash-lite as the default Stagehand model. This model helps Stagehand decide which elements to click and how to extract data. You can change the model if you prefer using OpenAI or Anthropic for these background tasks.

Practical Implementation for Web Agents

The typical flow is to start a session, navigate to the page, and let act handle the messy part of interaction.

For example, an agent looking for flights could issue an instruction like "type London in the departure field." Browserbase maps that request to the right element even when the page uses unstable classes or dynamic IDs, then extract can pull prices and schedules into a format the model can compare directly.

Closing the session matters because idle sessions still consume resources and can add cost. The default viewport is 1024x768, but you can change it when responsive layouts affect the workflow.
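Because idle sessions keep consuming resources and can add cost, structure agent code so the session always closes, even when a step fails. This is a minimal sketch that assumes a hypothetical call_tool function forwarding tools/call requests to the Browserbase MCP server. The tool names follow the list above. The argument names (url, action, instruction) are assumptions to check against the schemas the server reports.

```python
from contextlib import contextmanager
from typing import Any, Callable, Iterator

# Hypothetical transport: takes a tool name and arguments, returns the result.
ToolCaller = Callable[[str, dict], Any]


@contextmanager
def browser_session(call_tool: ToolCaller) -> Iterator[ToolCaller]:
    """Open a remote session and guarantee it is ended, even on errors."""
    call_tool("start", {})
    try:
        yield call_tool
    finally:
        call_tool("end", {})  # idle remote sessions still consume resources


def find_flights(call_tool: ToolCaller) -> Any:
    with browser_session(call_tool) as tool:
        tool("navigate", {"url": "https://flights.example.com"})
        tool("act", {"action": "type London in the departure field"})
        return tool("extract", {"instruction": "list prices and departure times"})


# A fake caller that records calls, so the lifecycle can be checked offline.
calls: list[str] = []


def fake_call_tool(name: str, arguments: dict) -> Any:
    calls.append(name)
    return {"prices": []} if name == "extract" else None


result = find_flights(fake_call_tool)
print(calls)
```

The try/finally inside the context manager is the important design choice. A failed act step still triggers end, so a flaky page cannot leave a billed session running.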

Verification and Testing Methods

Testing Browserbase mostly means validating the handoff between your client and the hosted browser. Disabling headless mode in the dashboard makes it easier to see whether the agent is blocked by the site or simply making a bad decision.

The server provides logs for every Stagehand step. If an action fails, those logs help explain what the model tried to do and why it missed, which makes it easier to refine prompts or switch to a more explicit workflow.

Browserbase offers stealth-related options on some plans to reduce common bot-detection signals, which can help when scraping sites with stricter security measures. Results vary by site, so do not treat these options as a guarantee against detection.

Server Comparison and Evaluation

Browserbase offers a different experience compared to local servers like Playwright. It focuses on ease of use through natural language rather than technical precision.

Pros of Browserbase MCP:

- Cloud Hosting: No local browser installation or maintenance is needed.

- Natural Language: Actions use simple text instructions instead of complex selectors.

- Stealth Options: Offers features intended to reduce common bot-detection signals.

- Vision Integration: Annotated screenshots help the agent understand layouts.

Cons of Browserbase MCP:

- Costs: Requires a paid plan for high-volume usage or advanced features.

- Internet Reliance: Performance depends on the speed of the cloud connection.

- External Keys: Needs multiple API keys to function.

Best for: teams that care more about fast iteration and cloud scale than low-level browser control.

mcp-chrome (Chrome MCP Server)

Most browser automation tools start from a blank session. mcp-chrome does the opposite: it plugs into the browser you are already using. That means the agent can work with your existing tabs, active logins, and saved state instead of rebuilding context from scratch.

The bridge-and-extension design keeps control local rather than routing traffic through a hosted browser service. That is a meaningful privacy advantage, especially for internal workflows, although it still requires trust in the MCP client because the client can access whatever browser data the granted tools expose.

Available Tools and Capabilities

The server provides more than 20 tools for inspecting the browser and acting on what it finds.

- Tab Management: Lists open tabs and switches between them.

- Semantic Search: Finds information across all open windows using a vector database.

- Screenshot: Captures an image of the current page.

- Network Capture: Tracks data moving between the browser and websites.

- History and Bookmarks: Reads saved links and past visits.

- Click and Fill: Handles buttons and text input fields.

- Console Logs: Provides access to the JavaScript console for debugging.

Technical Architecture and Speed

The bridge is a Node.js 20 and TypeScript application. It exposes a local HTTP endpoint to the AI host and relays requests to the browser extension. For semantic search, the project uses WebAssembly with SIMD support to speed up vector operations on supported systems.
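Semantic search across tabs comes down to comparing vectors. Each tab's content and the query are turned into embeddings, and tabs are ranked by similarity. The sketch below shows only that ranking step, using plain cosine similarity over tiny hand-written vectors. Real embeddings come from a model and have hundreds of dimensions, and this is not mcp-chrome's code. SIMD instructions speed up exactly this kind of loop by processing several multiply-adds at once.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: dot product divided by the product of vector lengths."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def rank_tabs(query: list[float], tabs: dict[str, list[float]]) -> list[tuple[str, float]]:
    """Return (tab title, score) pairs, most similar first."""
    scored = [(title, cosine(query, vec)) for title, vec in tabs.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical 3-dimensional "embeddings" for three open tabs.
tabs = {
    "Bank - Statements": [0.9, 0.1, 0.0],
    "Team chat": [0.1, 0.9, 0.2],
    "Recipe blog": [0.0, 0.2, 0.9],
}
query = [0.8, 0.2, 0.1]  # stands in for "my last three transactions"

for title, score in rank_tabs(query, tabs):
    print(f"{score:.3f}  {title}")
```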

Local processing keeps the browsing session private. The extension sends data to the bridge, and the bridge passes it to the AI client. This direct link can reduce latency compared with cloud-based browser tools because the server stays on the local machine.

Setup Process for the Bridge and Extension

Setup takes more work than most tools here because you need both the local bridge and the browser extension. In practice, that makes mcp-chrome one of the more manual setups in this list.

- Install the bridge tool by running pnpm install -g mcp-chrome-bridge.

- Register the tool with the command mcp-chrome-bridge register.

- Download the extension from the official source.

- Go to the Chrome extensions page and turn on Developer Mode.

- Select "Load unpacked" and pick the folder for the extension.

- Open the extension in the browser and verify the bridge is running.

Client Configuration and Activation

The MCP client connects to mcp-chrome over streamable HTTP rather than the stdio transport used by many local servers.

```json
{
  "mcpServers": {
    "chrome-mcp-server": {
      "type": "streamableHttp",
      "url": "http://127.0.0.1:12306/mcp"
    }
  }
}
```

Users must click the "Connect" button in the extension interface. Until that happens, the MCP client will not see the available tools. Once connected, the extension icon changes color to indicate an active session.
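Because mcp-chrome uses streamable HTTP, the first thing a client sends is an ordinary HTTP POST carrying a JSON-RPC initialize request. This minimal sketch uses Python's standard library to build that request for the endpoint in the config above. The protocol version string and client name are assumptions. The code only sends anything if you uncomment the urlopen call while the bridge is running and the extension is connected.

```python
import json
import urllib.request

ENDPOINT = "http://127.0.0.1:12306/mcp"  # from the client config above


def build_initialize_request(endpoint: str = ENDPOINT) -> urllib.request.Request:
    """Build (but do not send) the JSON-RPC initialize POST for a streamable HTTP server."""
    body = {
        "jsonrpc": "2.0",
        "id": 1,
        "method": "initialize",
        "params": {
            "protocolVersion": "2025-03-26",  # assumption: use what your client negotiates
            "capabilities": {},
            "clientInfo": {"name": "connectivity-check", "version": "0.1"},  # hypothetical
        },
    }
    return urllib.request.Request(
        endpoint,
        data=json.dumps(body).encode("utf-8"),
        method="POST",
        headers={
            "Content-Type": "application/json",
            # Streamable HTTP servers may answer with plain JSON or an SSE stream.
            "Accept": "application/json, text/event-stream",
        },
    )


request = build_initialize_request()
print(request.get_method(), request.full_url)
# To actually probe the bridge (requires it to be running and connected):
# with urllib.request.urlopen(request, timeout=5) as response:
#     print(response.status, response.headers.get("Content-Type"))
```

If the probe is refused, the bridge is not listening. If the request succeeds but your MCP client still lists no tools, check the extension's connection state.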

Practical Implementation Example

This setup is especially useful when the agent needs to operate inside sites where you are already signed in. Instead of re-authenticating through a fresh browser context, it can move across your existing tabs and continue from where you left off.

A user can say: "Find my bank statement tab and tell me the last three transactions." The agent uses semantic search across open tabs, switches to the right one, and reads the relevant page content without making you log in again.

It also works well for developer tasks like checking which API call is taking the most time on a page by capturing and analyzing live network activity.

Testing the Integration

Verifying the setup mostly means checking the bridge logs and extension status. If a tool fails, the terminal usually shows why, and you can watch the tabs switch as the agent moves between them.

For a simple test, ask the agent to list your open tabs. If that works, the connection is active. From there, you can test click actions or semantic search across tabs without entering a URL manually.

Evaluation of the Server

mcp-chrome is a strong option for local automation. It reuses your existing browser, tabs, and logins rather than creating a fresh session.

Pros of mcp-chrome:

- Login Reuse: Works with active accounts and saved data.

- Local Privacy: Browser control stays on the user's computer instead of passing through a hosted browser service.

- Performance: Local communication can reduce latency compared with hosted browser tools.

- Multi-tab Search: Search can feel responsive on supported hardware.

- Developer Friendly: Access to console logs and network data.

Cons of mcp-chrome:

- Manual Setup: Loading an extension manually is required, and the bridge can add native install friction depending on your local Node environment.

- Browser Limit: Only works with Chrome and Chromium-based browsers.

- Early Development: The tool is still in an early stage of release.

- Single User: Not designed for server-side or multi-user environments.

Best for: personal or internal workflows where the agent needs access to the browser session you already use every day.

Browser Use MCP Server

Browser Use sits between a low-level automation tool and a full hosted agent platform. It gives you a local mode for direct control, a cloud mode for managed execution, and stronger support for long-running tasks than most of the other MCP browser options.

Its toolset spans both direct browser actions and higher-level task orchestration, which is why it stands out for workflows that are too complex to script click by click.

- browser_task: Accepts a high-level instruction to complete a multi-step web action.

- navigate: Directs the browser to a specific URL.

- click: Interacts with a specific element on the page.

- extract_content: Pulls text and data from the active tab.

- list_profiles: Shows saved browser configurations and authentication states.

- monitor_task: Tracks the progress of a running action using a unique ID.

Configuration for Local and Cloud Environments

The local version runs through uvx, which handles the Python environment and dependencies for you. It makes sense if you want to keep browsing data on your own machine, but it also means bringing your own model keys because the local server is only the bridge.

The cloud version uses HTTP and an API key from the Browser Use dashboard. That is also where Browser Use's persistence story is strongest: current docs describe persistent profiles and longer-lived cloud sessions more clearly than the local stdio setup. If your agent needs to stay logged in across sessions, Browser Use is much better aligned with that workflow than tools that default to fresh browser contexts.

```json
{
  "mcpServers": {
    "browser-use": {
      "command": "uvx",
      "args": ["--from", "browser-use[cli]", "browser-use", "--mcp"]
    }
  }
}
```

Operational Details and Management

Setting BROWSER_USE_HEADLESS=false lets you watch the browser directly, which helps when the agent gets stuck on a captcha or a messy workflow. The server also exposes status updates, logs, and session messages so you can inspect what happened during a task.

Integration with ChatGPT, Claude Desktop, or Cursor requires client-specific MCP settings. For hosted MCP clients, Browser Use documents connecting through its HTTP endpoint with an API key header. In local mode, expect to provide your own LLM API key, since none is bundled with the MCP server.

browser_task is the feature that defines the product. Instead of micromanaging every click, you can hand the agent a goal like finding the cheapest price across several stores and let the server manage navigation, extraction, and progress updates through monitor_task.
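The hand-off pattern behind browser_task and monitor_task is submit-then-poll: start the task, keep the returned ID, and check its status until it finishes or a deadline passes. The sketch below rests on two assumptions. It uses a hypothetical call_tool function, and it expects responses with task_id and status fields whose values include "running", "completed", and "failed". Check the real response shape your Browser Use version returns before relying on these names.

```python
import time
from typing import Callable

ToolCaller = Callable[[str, dict], dict]  # hypothetical: tool name + args -> result
TERMINAL = {"completed", "failed"}        # assumed status values


def run_task(call_tool: ToolCaller, goal: str, timeout_s: float = 300.0,
             poll_every_s: float = 2.0,
             sleep: Callable[[float], None] = time.sleep) -> dict:
    """Submit a high-level goal, then poll its status until it ends or times out."""
    task_id = call_tool("browser_task", {"task": goal})["task_id"]
    deadline = time.monotonic() + timeout_s
    while True:
        status = call_tool("monitor_task", {"task_id": task_id})
        if status["status"] in TERMINAL:
            return status
        if time.monotonic() >= deadline:
            raise TimeoutError(f"task {task_id} still running after {timeout_s}s")
        sleep(poll_every_s)


# Offline stand-in: reports "running" twice, then "completed".
_states = iter(["running", "running", "completed"])


def fake_call_tool(name: str, arguments: dict) -> dict:
    if name == "browser_task":
        return {"task_id": "task-1"}
    return {"task_id": arguments["task_id"], "status": next(_states)}


print(run_task(fake_call_tool, "find the cheapest price across three stores",
               sleep=lambda s: None))
```

The explicit deadline keeps a stuck task, for example one blocked by a captcha, from hanging the agent indefinitely. Injecting sleep makes the loop easy to test without waiting.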

Testing the Automation Flow

Testing mostly means running a simple task and checking the logs. Setting the logging level to debug shows every request and response between the AI and the browser, which helps explain whether a task failed because of the page, the prompt, or the workflow.

A basic test is to ask the agent to search for a term and return the result titles. Checking list_profiles also confirms whether the server can access saved session data instead of starting from a fresh browser instance.

You can also test error handling by giving the agent an impossible task, such as navigating to a site that does not exist, and checking whether the failure is reported cleanly instead of wasting extra steps.

Where Browser Use Fits Best

Browser Use is strongest when you need more than one-off page actions. Its main advantage is persistence: profiles, cloud sessions, and long-running tasks are built into the product rather than added as a separate layer.

- Hybrid Options: Choice between local hardware or cloud scalability.

- Real-time Monitoring: Tools to track the status of long-running tasks.

- Persistent Profiles: Keeps logins and cookies across sessions.

- High-level Logic: browser_task handles complex instructions.

- Tradeoff: Local mode needs your own model keys, while cloud mode adds API costs and extra profile management.

Best for: agents that need to resume work across sessions instead of starting from scratch every time.

Chrome DevTools MCP Server

Most MCP browser servers are designed to finish tasks. Chrome DevTools MCP is different: it is designed to inspect why a page behaves the way it does. It plugs into the native Chrome DevTools Protocol, which makes it more useful for debugging, auditing, and performance work than for general-purpose browsing.

By connecting to Chrome's remote debugging interface, the server exposes the same class of signals a developer would inspect manually in DevTools, such as console output, network activity, and performance traces.

Capabilities for Deep Browser Analysis

The server provides tools for inspecting what the page is doing behind the UI layer.

- performance_start_trace: Records a performance trace of the browser engine so the agent can find scripts that slow down the page.

- Console messages: Reads the warnings and errors generated by the site's scripts.

- Network requests: Checks whether images, scripts, or fonts fail to load or take too long.

- Page inspection: Examines the page structure to find elements that cause layout shifts.

- LCP measurement: Evaluates Largest Contentful Paint to judge how quickly the main content renders.

Technical Communication and Integration

The Chrome DevTools MCP server is an Apache 2.0 licensed project that runs locally and connects to the browser over WebSocket. It is currently in preview, so the feature set may change as the project matures.

Most users run this server with npx. By default, the server launches its own Chrome instance. To attach it to a Chrome window you started yourself, that browser needs remote debugging enabled so the server can connect and translate DevTools data into something the model can analyze.

```json
{
  "mcpServers": {
    "chrome-devtools": {
      "command": "npx",
      "args": ["-y", "chrome-devtools-mcp@latest"]
    }
  }
}
```

Practical Implementation for Site Audits

In a real workflow, the agent usually attaches to an active tab and starts with the fastest signal available: console output. If a page is broken, that alone may be enough to surface the failing script, file name, and error context before the agent does anything more expensive.

For performance work, the agent can start a trace, reload the page, and then look for long tasks that block the main thread. That kind of evidence is what turns a vague "this page feels slow" complaint into something actionable.
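Finding long tasks in a trace is mostly a filtering job. This minimal sketch assumes the trace was exported in Chrome's Trace Event Format. In that format, a traceEvents list holds complete events marked with "ph": "X" and a "dur" duration in microseconds. The sketch also assumes top-level tasks are named RunTask, so the name set is a parameter you may need to adjust. The sample events are made up, and the 50 ms cutoff is the standard long-task threshold.

```python
LONG_TASK_US = 50_000  # 50 ms, expressed in trace microseconds


def long_tasks(trace: dict, task_names: frozenset = frozenset({"RunTask"})) -> list[dict]:
    """Return top-level task events longer than 50 ms, longest first."""
    hits = [
        event for event in trace.get("traceEvents", [])
        if event.get("ph") == "X"
        and event.get("name") in task_names
        and event.get("dur", 0) > LONG_TASK_US
    ]
    return sorted(hits, key=lambda event: event["dur"], reverse=True)


# Made-up sample trace, trimmed to the fields this filter reads.
sample_trace = {
    "traceEvents": [
        {"name": "RunTask", "ph": "X", "ts": 1_000, "dur": 12_000, "tid": 1},
        {"name": "RunTask", "ph": "X", "ts": 20_000, "dur": 180_000, "tid": 1},
        {"name": "RunTask", "ph": "X", "ts": 250_000, "dur": 65_000, "tid": 1},
        {"name": "FunctionCall", "ph": "X", "ts": 21_000, "dur": 170_000, "tid": 1},
    ]
}

for event in long_tasks(sample_trace):
    print(f"long task at ts={event['ts']}us lasting {event['dur'] / 1000:.0f} ms")
```

A real trace mixes many processes and threads, so a fuller version would also restrict the filter to the renderer's main thread before reporting anything.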

The agent can also simulate different device types and inspect the DOM to spot layout or responsiveness issues that are hard to catch from normal browsing alone.

Testing and System Verification

Testing the server requires a browser window and the correct startup flags. Start Chrome with --remote-debugging-port=9222, then verify the connection by asking the AI to list the open tabs.

A simple test for the console tool is to ask the agent to find JavaScript errors on a page with a known script issue. For performance testing, ask it to measure LCP on a target page and return the value.
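A raw LCP number is easier to act on with the standard Core Web Vitals bands attached: 2.5 seconds or less is good, up to 4 seconds needs improvement, and anything slower is poor. Here is a small helper for labeling the value the agent returns, assuming it arrives in milliseconds.

```python
def classify_lcp(lcp_ms: float) -> str:
    """Map a Largest Contentful Paint value (milliseconds) to its Core Web Vitals band."""
    if lcp_ms < 0:
        raise ValueError("LCP cannot be negative")
    if lcp_ms <= 2500:
        return "good"
    if lcp_ms <= 4000:
        return "needs improvement"
    return "poor"


for value in (1800, 3200, 5100):
    print(value, classify_lcp(value))
```

Google assesses these bands at the 75th percentile of real-user data. A single lab measurement from the agent is a useful signal, but it is not the same as a field score.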

You can also check the network tool by asking for all images on a page along with their file sizes or failed request status codes.

Comparative Strengths and Weaknesses

The Chrome DevTools MCP server fills a unique role compared to general automation tools. It focuses on the "why" of a page rather than just the "what."

Pros of Chrome DevTools MCP:

- Engine Integration: Uses Chrome's own DevTools instrumentation, so its data matches what you would see in DevTools.

- Performance Focus: Best choice for measuring Core Web Vitals.

- Error Detection: Finds hidden bugs in scripts and network calls.

- Audit Logic: Suitable for professional quality assurance work.

Cons of Chrome DevTools MCP:

- Preview Status: Because the tool is still in preview, behavior and available features may change.

- Narrow Scope: It has fewer tools for complex form filling than other servers.

- Chrome Only: It does not work with Firefox or Safari.

Best for: debugging, performance analysis, and QA workflows where browser internals matter more than general automation convenience.

Comparison of All Browser Automation Servers

If you only need a quick recommendation, use this shortlist instead of reading every section again.

Best overall: Playwright MCP

The most balanced option for most teams. It is local, predictable, well-documented, and efficient on tokens.

- Supports multiple engines like Chromium and WebKit.

- Uses accessibility trees to save on AI token costs.

- Offers detailed traces for fixing broken steps.

- Requires a local Node.js installation.

Best for cloud scale: Browserbase MCP

The easiest choice when you want hosted browsers, natural-language actions, and less infrastructure work.

- Operates without any local browser installation.

- Offers stealth options intended to reduce common bot-detection signals.

- Relies on an external AI model, through Stagehand, to interpret actions and extract data.

- Requires a paid subscription for high usage levels.

Best for local logged-in browsing: mcp-chrome

The best fit when the agent needs to work inside your existing Chrome session with real tabs and saved logins.

- Reuses your current browser sessions and logins.

- Performs fast searches across all open tabs.

- Runs the browser session locally rather than on a third-party browser cloud.

- Needs a manual installation of a Chrome extension.

Best for persistent workflows: Browser Use MCP

A stronger option than Playwright when persistence, profile reuse, and long-running tasks matter more than minimal setup.

- Supports persistent profiles for staying logged into sites.

- Handles high-level goals with a single command.

- Connects to hosted MCP clients through an HTTP endpoint with API-key-based authentication.

- Needs separate API keys for the AI models.

Best for debugging and audits: Chrome DevTools MCP

This is the specialist option for inspecting what the page is doing internally, not just automating clicks.

- Gives the agent access to the console and network logs.

- Measures page load speeds and performance metrics.

- Identifies errors in the site's JavaScript files.

- Lacks the broad automation tools found in other servers.

The following table gives a more detailed side-by-side view if your decision depends on setup model, persistence, or debugging depth.

Key Differences at a Glance

- Playwright MCP: Best when you want structured browser control, predictable behavior, and a strong local default.

- Browserbase MCP: Best when you want hosted browser infrastructure, natural-language actions, and easier cloud scaling.

- mcp-chrome: Best when the agent needs access to your existing Chrome tabs, logins, and local browser state.

- Browser Use MCP: Best when persistence, saved profiles, and long-running browser tasks matter more than minimal setup.

- Chrome DevTools MCP: Best when you care more about debugging, performance audits, and browser internals than general automation convenience.

How These Tools Differ in Practice

The real choice is not which MCP server can click buttons. It is where the browser runs, how much session state you need to keep, and how much debugging depth your workflow requires.

- Playwright MCP is the most flexible local option. It is the best fit when you want Playwright-style control, predictable snapshots, and the ability to choose how the browser is launched.

- Browserbase MCP is the clearest hosted option. It makes the most sense when you want managed browser infrastructure, built-in session handling, and less local operational work.

- mcp-chrome is the strongest option for working inside an already logged-in Chrome profile. Its biggest advantage is access to your real tabs, cookies, and browser state rather than a fresh automation session.

- Browser Use MCP sits between direct browser control and a hosted agent workflow. It is a better fit when persistence and longer-running tasks matter more than minimal setup.

- Chrome DevTools MCP is the specialist choice for debugging. It is less about broad automation coverage and more about inspecting performance, console output, network activity, and browser internals.

Selecting the Best Server for Your Needs

Selecting the right server depends less on raw feature count and more on what kind of browser work your agent needs to do. If you are comparing MCP servers against broader browser-agent approaches, read our breakdown of agent browsers vs Puppeteer and Playwright.

- For Highly Scalable Cloud Operations: Browserbase is the cleaner pick for hosted browser execution, while Browser Use is stronger when those cloud tasks need persistent profiles and session reuse.

- For Local Privacy and Login Reuse: The mcp-chrome server allows the agent to work within your existing browser session.

- For Technical Debugging and Audits: Chrome DevTools MCP gives the agent the data needed to analyze page performance.

- For General Development and Testing: Playwright MCP remains a reliable standard for developers who want full control over the browser engine.

Transport also matters. Stdio is simple and common for local tools, while streamable HTTP is a better fit for persistent or remote setups. Regardless of which server you choose, review its security settings, keep both host and server updated, and treat navigation restrictions like origin allowlists as operational guardrails rather than a complete security boundary.
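The stdio transport is simple enough to show in a few lines. Per the MCP specification, each JSON-RPC message is written as a single line of JSON terminated by a newline, with no embedded newlines. This minimal sketch shows that framing using in-memory streams in place of a real process's stdin and stdout. The message contents are illustrative.

```python
import io
import json


def write_message(stream: io.TextIOBase, message: dict) -> None:
    """Frame one JSON-RPC message for stdio: compact JSON plus a newline.

    json.dumps escapes newlines inside string values, so the line itself
    never contains a raw newline.
    """
    stream.write(json.dumps(message, separators=(",", ":")) + "\n")


def read_messages(stream: io.TextIOBase) -> list[dict]:
    """Split a stdio stream back into messages, one per non-empty line."""
    return [json.loads(line) for line in stream if line.strip()]


pipe = io.StringIO()
write_message(pipe, {"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
write_message(pipe, {"jsonrpc": "2.0", "id": 2, "method": "tools/call",
                     "params": {"name": "browser_snapshot", "arguments": {}}})

pipe.seek(0)
for message in read_messages(pipe):
    print(message["id"], message["method"])
```

Streamable HTTP carries the same JSON-RPC messages in HTTP request and response bodies instead. That is what lets the server run as a long-lived process or on another machine.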

Conclusion

Browser automation over MCP is now practical enough that the hard part is not finding a tool that works. It is choosing the one that matches the way your agent actually needs to work.

If you want the safest default, start with Playwright MCP. If you want managed cloud browsers, choose Browserbase. If you need access to your real logged-in Chrome session, choose mcp-chrome. If persistence and longer-running workflows matter most, choose Browser Use. If your main goal is debugging, audits, and browser inspection, choose Chrome DevTools MCP.

That is the real shortcut: pick based on workflow, not feature count. The best MCP server is the one that matches where your browser runs, how much state you need to keep, and how much control or visibility you need day to day.
