If you want the short answer first, here it is: Playwright is the better default for most AI agents, while Puppeteer is the better fit for Chromium-only, CDP-heavy workflows.

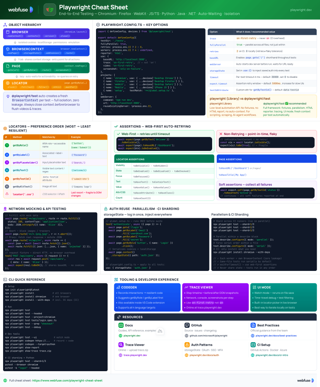

That is the real decision in 2026. Both tools are mature enough for production; the difference is where each is strongest:

- Choose Playwright if you want the most reliable general-purpose framework for agents that must survive dynamic pages, scale across many sessions, and run beyond Chromium.

- Choose Puppeteer if your stack is already centered on Chrome or Edge and you want more direct protocol-oriented control with less abstraction.

This guide compares them specifically for AI agent control, not just test automation.

What Changed in 2026?

The comparison is less simplistic than it used to be:

- Puppeteer is not just "Chromium-only" anymore. It now supports Chrome and Firefox, although Chromium remains its strongest path.

- Puppeteer also improved its locator story. It is no longer accurate to describe it as a framework where every interaction must be hand-managed with `waitForSelector()`.

- Playwright is still the stronger default for engineering teams because its reliability features, tracing, and cross-browser model are more cohesive.

- AI-agent teams increasingly need a layer above raw browser automation. We cover that tradeoff later in the article.

What Actually Matters for AI Agent Control

For AI agents, the most important evaluation criteria are usually these:

- Reliability on dynamic pages: Can the framework keep actions stable when the DOM updates, elements animate, or content arrives late?

- Session isolation: Can you run many agents in parallel without cookie leakage, auth collisions, or excess browser overhead?

- Observability: When an agent fails on step 7 of 14, can you tell whether the problem was timing, navigation, selectors, network state, or model reasoning?

- Protocol access: How easily can you inspect requests, performance, service workers, or low-level browser state?

- Browser coverage: Are you only automating Chrome, or do you need Firefox/WebKit coverage too?

- LLM compatibility: How much work is required to turn raw browser state into something an LLM can reason over efficiently?

That is why the answer is not just "Playwright has auto-waiting" or "Puppeteer has CDP." The comparison that actually matters is about operational reliability.

Playwright vs. Puppeteer: Quick Decision Matrix

| Scenario | Better Choice | Why |
|---|---|---|
| Most AI agents on modern websites | Playwright | Better default reliability, tracing, contexts, and browser coverage |
| Chromium-only agents with deep instrumentation | Puppeteer | More direct fit for CDP-heavy workflows |
| Cross-browser automation | Playwright | First-class Chromium, Firefox, and WebKit support |
| Dynamic SPAs with flaky timing | Playwright | Stronger actionability checks and debugging workflow |
| Lean Chrome scripting and screenshots/PDFs | Puppeteer | Lightweight and mature for Chromium tasks |
| Many isolated sessions in parallel | Slight edge: Playwright | Both support BrowserContexts, but Playwright's ergonomics are stronger |
| Agent-readable page state for LLMs | Neither by default | You often need an extra abstraction layer or agent-browser approach |

Side-by-Side Comparison for AI Agent Workflows

Browser Support

Playwright still has the cleaner browser support story. One API covers Chromium, Firefox, and WebKit, which matters if your agent needs to behave consistently across browser engines or you are validating flows that break outside Chrome.

Puppeteer now supports Chrome and Firefox, which is an important change from older comparisons. That improvement makes Puppeteer more relevant than outdated "Chromium-only" summaries suggest, but Playwright still offers broader browser coverage and a more unified cross-browser experience.

For AI agent teams, this matters most when your agents interact with customer-facing apps where browser behavior can differ in subtle but important ways.
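As a rough illustration of what "one API across engines" means in practice, the sketch below loads the same page in each engine Playwright ships and compares the first heading. The helper names `readHeadingPerEngine` and `enginesAgree` are hypothetical, and the sketch assumes the `playwright` package and its browsers are installed.

```javascript
// Engine names exported by the `playwright` package.
const ENGINES = ['chromium', 'firefox', 'webkit']

// Hypothetical helper: load `url` in each engine and record the first <h1>.
async function readHeadingPerEngine(url, engineNames = ENGINES) {
  // Imported lazily so this file still loads where Playwright is not installed.
  const playwright = await import('playwright')
  const results = []
  for (const name of engineNames) {
    const browser = await playwright[name].launch()
    try {
      const page = await browser.newPage()
      await page.goto(url)
      const heading = await page.locator('h1').first().textContent()
      results.push({ engine: name, heading })
    } finally {
      await browser.close()
    }
  }
  return results
}

// Pure helper: true when every engine reported the same heading text.
function enginesAgree(results) {
  return new Set(results.map((r) => r.heading)).size <= 1
}
```

A disagreement between engines is a cheap early signal that an agent flow may behave differently outside Chrome.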

Reliability on Dynamic Pages

This is where Playwright usually pulls ahead.

Modern agent workflows often fail not because the selector is wrong, but because the page is still hydrating, an overlay intercepts the click, the route change is delayed, or the UI changes between runs. Playwright's model is built around actionability checks, locators, and traceable execution. That gives you a more resilient base for long multi-step workflows.

Puppeteer has improved a lot here. Its locator APIs now support retrying and auto-wait behavior, so it is no longer fair to frame Puppeteer as purely "manual waits everywhere." Still, in day-to-day engineering, Playwright tends to give teams a stronger reliability baseline on complex SPAs.

The contrast is smaller than many articles claim, but it still exists:

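```javascript
// Playwright
import { chromium } from 'playwright'

const browser = await chromium.launch()
const context = await browser.newContext()
const page = await context.newPage()
await page.goto('https://example.com/products')
// click() waits until the button is visible, stable, and enabled
await page.getByRole('button', { name: 'Load more' }).click()
// first() avoids a strict-mode error when several .price elements match
const price = await page.locator('.price').first().textContent()
console.log(price)
await browser.close()
```

```javascript
// Puppeteer
import puppeteer from 'puppeteer'

const browser = await puppeteer.launch()
const page = await browser.newPage()
await page.goto('https://example.com/products')
// The locator retries the click until the element is actionable
await page.locator('::-p-text(Load more)').click()
await page.waitForSelector('.price')
// $eval reads the first matching element
const price = await page.$eval('.price', (el) => el.textContent)
console.log(price)
await browser.close()
```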

Both examples can be robust. Playwright usually wins here because its debugging and recovery workflow is stronger when agent actions get flaky.

Debugging and Observability

For AI agent systems, debugging is not optional. You need to inspect what happened when the model chose the wrong button, the page silently redirected, or the UI was different from what your prompt assumed.

Playwright has the better integrated observability stack:

- Trace Viewer for step-by-step replay

- Inspector for interactive debugging

- Codegen for quickly generating and refining flows

- Strong context around network, DOM state, screenshots, and action timing

Puppeteer can absolutely be debugged well, especially with Chrome DevTools and protocol-level instrumentation, but the workflow is less unified. For most teams, that means Playwright shortens time-to-fix when agent runs fail in production-like environments.
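To make the Trace Viewer workflow concrete, here is a minimal sketch of wrapping one agent run in a Playwright trace. It assumes `browser` is an already-launched Playwright browser; `runWithTrace`, `tracePathFor`, and the `traces/` directory are hypothetical names chosen for illustration.

```javascript
// Hypothetical naming scheme for trace archives; adjust to your storage layout.
function tracePathFor(runId) {
  const safeId = String(runId).replace(/[^a-zA-Z0-9_-]/g, '_')
  return `traces/agent-run-${safeId}.zip`
}

// Runs `task(page)` in a fresh context and always saves a trace, even on failure.
async function runWithTrace(browser, runId, task) {
  const context = await browser.newContext()
  await context.tracing.start({ screenshots: true, snapshots: true })
  try {
    const page = await context.newPage()
    return await task(page)
  } finally {
    await context.tracing.stop({ path: tracePathFor(runId) })
    await context.close()
  }
}
```

The saved archive can then be opened with `npx playwright show-trace <path>` to replay the run step by step. Saving the trace in `finally` is a deliberate choice here: the failing runs are the ones you most want to inspect.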

Protocol Access: CDP and BiDi

Puppeteer remains the more natural choice when your workflow is deeply tied to browser protocols.

In 2026, that means more than just Chrome DevTools Protocol (CDP):

- CDP remains central for Chromium-focused workflows such as detailed network inspection, performance profiling, and lower-level Chrome instrumentation.

- WebDriver BiDi also matters now, especially because Puppeteer uses BiDi for Firefox automation.

That nuance is important because the comparison is no longer simply "Puppeteer equals CDP only."

Playwright can also open raw CDP sessions on Chromium, so protocol-level access is not exclusive to Puppeteer. But if direct protocol work is central to your system, especially in Chromium-heavy environments, Puppeteer is often the more straightforward pick.

```javascript
import puppeteer from 'puppeteer'

const browser = await puppeteer.launch()
const page = await browser.newPage()

// Open a raw CDP session and subscribe to network events
const client = await page.createCDPSession()
await client.send('Network.enable')

client.on('Network.responseReceived', ({ response }) => {
  if (response.url.includes('/api/')) {
    console.log(`[Agent] ${response.url} -> HTTP ${response.status}`)
  }
})
```

That remains one of Puppeteer's clearest strengths for browser-agent engineering.
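For comparison, the raw CDP session that Playwright exposes on Chromium can be used in much the same way. The sketch below assumes `page` is a Playwright page from a Chromium browser; `watchApiResponses` and `isApiUrl` are hypothetical helper names, and the `/api/` path convention is only an example.

```javascript
// Pure helper: does this URL's path contain an /api/ segment?
function isApiUrl(url) {
  try {
    return new URL(url).pathname.includes('/api/')
  } catch {
    return false // not a parseable absolute URL
  }
}

// Attach a raw CDP session to a Playwright page (Chromium only) and report API responses.
async function watchApiResponses(page, onMatch = console.log) {
  const client = await page.context().newCDPSession(page)
  await client.send('Network.enable')
  client.on('Network.responseReceived', ({ response }) => {
    if (isApiUrl(response.url)) {
      onMatch(`[Agent] ${response.url} -> HTTP ${response.status}`)
    }
  })
  return client
}
```

The extra hop through `page.context()` is a small example of the added abstraction the article refers to: the capability is there, but Puppeteer gets you to the protocol in fewer steps.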


Scaling and Session Isolation

Older comparisons often overstate this difference. Both Playwright and Puppeteer support BrowserContexts, so both can isolate cookies, storage, and auth state without launching a full browser process for every session.

That said, Playwright still has a practical edge for large agent fleets because the ergonomics around contexts, tracing, and multi-browser support are stronger. If you are orchestrating many concurrent agents, Playwright tends to be easier to operate reliably.

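```javascript
import { chromium } from 'playwright'

// Placeholder list; replace with the pages your agents should visit
const targetUrls = ['https://example.com']

const browser = await chromium.launch()
const results = await Promise.all(
  targetUrls.map(async (url) => {
    // Each context has its own cookies, storage, and auth state
    const context = await browser.newContext()
    try {
      const page = await context.newPage()
      await page.goto(url)
      const heading = await page.locator('h1').first().textContent()
      return { url, heading }
    } finally {
      await context.close() // release the context even if navigation fails
    }
  })
)
await browser.close()
```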

The important point is this: Playwright is not uniquely capable of isolated sessions, but it is usually the more ergonomic framework for running them well at scale.
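Since Puppeteer supports BrowserContexts too, the same isolation pattern might look like the sketch below. It assumes `browser` is a Puppeteer browser on a recent version where `createBrowserContext()` is available (older releases used a differently named method). The `chunk` and `readHeadings` helpers and the default concurrency of 4 are hypothetical choices for illustration.

```javascript
// Pure helper: split work into batches so only `size` contexts are open at once.
function chunk(items, size) {
  if (size < 1) throw new RangeError('size must be at least 1')
  const batches = []
  for (let i = 0; i < items.length; i += size) {
    batches.push(items.slice(i, i + size))
  }
  return batches
}

// Visit each URL in its own isolated context, one batch at a time.
async function readHeadings(browser, urls, concurrency = 4) {
  const results = []
  for (const batch of chunk(urls, concurrency)) {
    const batchResults = await Promise.all(
      batch.map(async (url) => {
        const context = await browser.createBrowserContext()
        try {
          const page = await context.newPage()
          await page.goto(url)
          // $eval throws if the page has no <h1>
          const heading = await page.$eval('h1', (el) => el.textContent)
          return { url, heading }
        } finally {
          await context.close()
        }
      })
    )
    results.push(...batchResults)
  }
  return results
}
```

Batching is one simple way to cap browser overhead when the URL list is long; a work queue would keep utilization higher at the cost of more code.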

Bot Detection, CAPTCHAs, and Stealth

This is a major gap in many comparison articles.

Neither Playwright nor Puppeteer magically solves anti-bot defenses. Both can be used inside more advanced scraping or agent infrastructure, but neither should be treated as a turnkey stealth layer.

In practice:

- Playwright does not automatically bypass bot detection just because it is newer.

- Puppeteer does not automatically lose just because it is more Chrome-oriented.

- Successful production agents usually depend on a broader stack: browser hardening, proxy strategy, identity/session handling, and often infrastructure purpose-built for agent workflows.

If that is your actual use case, also read The Top 5 Best MCP Servers for AI Agent Browser Automation and Develop an AI Agent for Any Website with Webfuse.

Neither Tool Is Fully Agent-Native

This is the biggest nuance missing from many "Playwright vs Puppeteer" posts.

Both frameworks were designed primarily for engineers writing browser automation, not for LLMs reasoning over page state. You can absolutely build agent systems on top of them, but once you do, you often need to add your own layer for:

- compact page representations

- semantic element references

- persistent session orchestration

- tool abstractions that make sense to a model

- failure recovery between uncertain steps

That is why teams building browser-native AI systems increasingly compare Playwright and Puppeteer with agent-browser tools, MCP servers, or structured DOM interfaces rather than treating raw automation libraries as the whole solution.
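As one illustration of the first two items in that list, a compact page representation with semantic element references could start as small as the sketch below. The element descriptor shape (`role`, `name`, `selector`, `visible`) and the `buildAgentView` name are assumptions made for this example, not part of either library; in a real system the descriptors would be collected from the page by your own extraction step.

```javascript
// Turn element descriptors into a short text view plus a ref -> selector map.
// The model sees only the text; your tool layer resolves refs back to selectors.
function buildAgentView(elements) {
  const refs = new Map()
  const lines = []
  for (const el of elements) {
    if (!el.visible) continue // hidden elements only add noise and tokens
    const ref = refs.size + 1
    refs.set(ref, el.selector)
    const name = el.name.replace(/\s+/g, ' ').trim()
    lines.push(`[${ref}] ${el.role} "${name}"`)
  }
  return { text: lines.join('\n'), refs }
}
```

A model can then answer with something like "click 2", and the surrounding code looks up `refs.get(2)` and performs the click through Playwright or Puppeteer. Keeping selectors out of the prompt is the design choice here: it shortens the context and prevents the model from inventing selectors.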

How We Evaluated the Tradeoff

This comparison is based on the capabilities that matter most for production browser agents in 2026:

- current browser support and protocol models

- locator and waiting behavior

- debugging and trace tooling

- context/session isolation

- fit for LLM-driven browser workflows

The current reality is simple: Puppeteer has evolved, but Playwright is still the better default for most AI agent teams.

Final Verdict

If you are building an AI agent today and do not have a strong reason to choose otherwise, pick Playwright. It is the stronger default for most teams because it combines browser coverage, reliability on dynamic pages, observability, and maintainability better than Puppeteer.

Choose Puppeteer when your workflow is more Chromium-specific and protocol-heavy, especially if deep CDP access matters more than cross-browser resilience.

If your bigger challenge is giving an LLM a structured, efficient view of page state, you may need a layer above both tools.
